Most animals, including humans, have an instinctual aversion to cannibalism. (Notable exceptions include praying mantises and hamsters.) From an evolutionary standpoint, this makes sense: eating your own kind reduces a species’ success.

AI has no such compunction. It will gobble up any data it’s given. So, what are developers feeding AI? Most companies behind commercial LLMs don’t disclose their training datasets, but we do know that these datasets can include pirated books, text from the web, social media posts, and private medical records. Some of these sources are ethically dodgy for privacy and copyright reasons. You’re probably part of these datasets, too (check the site haveibeentrained to see).

Generally, the more data, the better for AI. In machine learning, this relationship is described by scaling laws, which hold for data as well as compute when training LLMs. Google took an early lead in beating machine learning benchmarks largely because it had access to more data than academic researchers and other competitors. Because of scaling laws, AI companies are sucking up all the data they can from every available source. As I’ve noted recently, this feeding frenzy suggests that we should be protective of student data.

But what happens when AI companies run out of available human data sources, including the ethically dodgy ones? They turn to synthetic data, that is, data generated by AI.

That means AI gets fed data generated by AI. The mad cow crisis of the 1990s taught us that we should be better stewards of what our food consumes. By extension, we might expect that feeding AI its own kind could have repercussions. But maybe it’s no surprise that a society that gleefully embraces a food product called Soylent will produce companies that encourage AI cannibalism.

Model collapse

In 2023, Ilia Shumailov and colleagues examined the possible repercussions of feeding AI models AI-generated content: “What will happen to GPT-{n} once LLMs contribute much of the language found online?” They found that “use of model-generated content in training causes irreversible defects in the resulting models.” They called this problem the “curse of recursion” and coined the term “model collapse” to describe the resulting defects. They argue that “the use of LLMs at scale to publish content on the Internet will pollute the collection of data to train them,” and they propose that the only solution to model collapse is to preserve training access to human-authored content. So, on the bright side, humans are still useful!

There are (at least) two major problems with using synthetic data to train AI, and some potential benefits as well. The first problem is the one Shumailov et al. pointed out: AI models that eat their own content become less effective. The second is a growing worry among AI ethicists: compounding bias in AI models. But synthetic data might also help counteract the majority-group bias present in datasets used to train AI. In other words, synthetic data isn’t so simple to dismiss.

Synthetic data decreases model effectiveness

“Usually, when you train a model on lots of data, it gets better and better the more data you train on,” explains computer and data scientist Julia Kempe. “This relation is called a ‘scaling law’ and has been shown to hold both empirically in many settings, and theoretically in several models.” In a paper on model collapse, Kempe and colleagues demonstrate that “when a model is trained on synthetic data…its performance does not obey the usual scaling laws.” Instead, it performs worse with each iteration of training on synthetic data.

The advantage of larger datasets is, in part, diversity. More data means more of the long tail is represented: more edge cases, more statistical anomalies. An LLM can draw on those data “tails” as it generates content, diversifying its output and making it less anodyne and generic. But its output still tends to shave off those spiky, interesting bits, because the model is less likely to sample low-probability events. Studying models fed that slightly smoothed-out content over successive rounds of training, Martin Briesch and colleagues observed “that the self-consuming training loop produces correct outputs, however, the output declines in its diversity.” Similarly, Shumailov et al. found that LLMs tend to “forget” their data tails, describing “a degenerative process whereby, over time, models forget the true underlying data distribution.”
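To make the idea of “forgetting the tails” concrete, here is a toy simulation. It is not taken from any of the papers above and is far simpler than training an LLM; it is a sketch under stated assumptions. Assume a hypothetical “true” distribution over 1,000 categories with a Zipf-like long tail, and assume each generation of “model” simply re-estimates category frequencies from a finite sample drawn from the previous generation. Once a rare category draws zero samples, its estimated probability becomes zero and it can never reappear, so the number of surviving categories can only shrink. The function names `zipf_like`, `refit`, and `simulate` and all parameter values are illustrative choices.

```python
import random
from collections import Counter


def zipf_like(n_categories, exponent=1.0):
    """Hypothetical 'true' distribution: category k gets weight 1/(k+1)**exponent."""
    weights = [1.0 / (k + 1) ** exponent for k in range(n_categories)]
    total = sum(weights)
    return [w / total for w in weights]


def refit(probs, n_samples, rng):
    """One 'generation': sample from the current model, then re-estimate
    each category's probability from the empirical counts.
    Categories with probability 0 are never drawn, so they stay at 0."""
    draws = rng.choices(range(len(probs)), weights=probs, k=n_samples)
    counts = Counter(draws)
    return [counts.get(k, 0) / n_samples for k in range(len(probs))]


def simulate(generations=30, n_categories=1000, n_samples=2000, seed=0):
    """Return how many categories still have nonzero probability
    after each generation (index 0 is the original distribution)."""
    rng = random.Random(seed)
    probs = zipf_like(n_categories)
    support = [sum(p > 0 for p in probs)]
    for _ in range(generations):
        probs = refit(probs, n_samples, rng)
        support.append(sum(p > 0 for p in probs))
    return support


if __name__ == "__main__":
    support = simulate()
    # Surviving categories: original, after one generation, after the last one.
    print(support[0], support[1], support[-1])
```

The design choice here is deliberately crude: a frequency table stands in for a model, and sampling error stands in for the smoothing the studies describe. Even so, it shows why the loss is one-directional: low-probability categories are the first to miss a sample, and nothing in the loop can bring them back.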

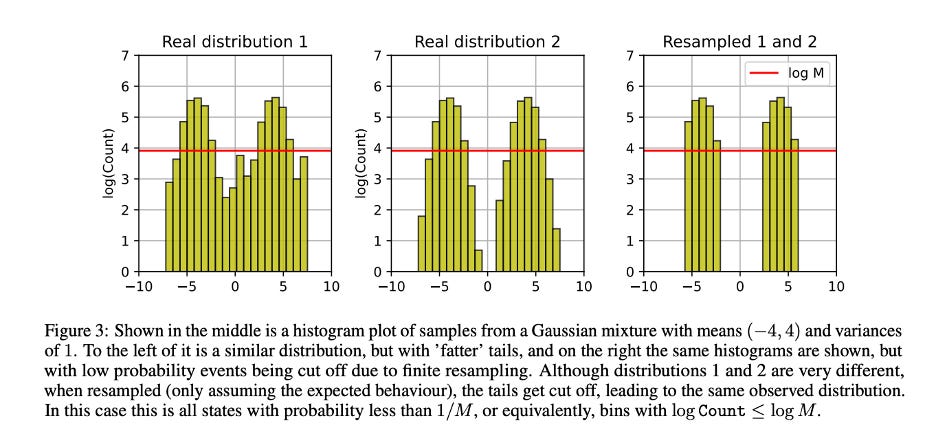

Figure 3 in the Shumailov et al. paper shows what this looks like.

Note how the distribution of data below the red line differs between the first and second examples, and how in the resampled 3 example the data below the red line are absent. The data below the red line represent the “tails.” Above the red line, in the sampled data, all three distributions look the same, even though their underlying distributions differ. I think of it like an iceberg: the samples show only the tip, but much of the interesting stuff is happening below the surface, and it’s dangerous to ignore it.

Compounding bias in AI

Consider all of those low-probability events that get lost in each iteration of training with synthetic data. They are underrepresented data, and they tend to matter most to underrepresented and marginalized groups. So, another problem with model collapse is compounding bias in AI.

AI ethics expert Anika Collier Navaroli writes that bias can also be compounded when AI hallucinations and biased outputs are fed back into the system: “The pathway of training new systems with synthetic data would mean constantly feeding biased and inaccurate outputs back into the system as new training data. Without intervention, this cycle ensures that the system will only double down on its own biases and inaccuracies.” She calls this “a feedback loop to hell.”

Angelina Wang and colleagues point out another problem with synthetic data from LLMs: using AI-generated data in social science research. Apparently, it’s become somewhat common practice to compensate for the underrepresentation of certain demographics by generating synthetic people from that group and running “them” through an opinion survey or user study. Really! I mean, points for trying, I guess, but wow, who would have thought this would be a problem??

Wang et al.’s paper is helpfully titled with its thesis: “Synthetic data, synthetic people: Large language models cannot replace human participants because they cannot portray identity groups.” LLM-generated responses tend to both misportray and flatten identity groups.

Misportrayal can happen because very little opinion data is explicitly tied to the author’s actual demographics, e.g., “As a [identity group], I believe….” The authors note that identity-connected opinions are at least as likely to come from the perspective of an out-group member. For example, opinions about autism and autistic identity may be more likely to come from non-autistic people. This means LLMs could portray minoritized groups largely from the majority’s perspective. Any research purporting to represent these demographics with synthetic people would be inaccurate because the LLM is sampling from both in-group and out-group opinions.

Flattening an identity group means that an LLM is more likely to erase subgroup variation and produce stereotypical members of that group. Models are rewarded during training for producing more likely results, which can narrow the range of permissible responses. Wang et al. note that “this is especially harmful in the context of flattening demographic groups with a history of being portrayed one-dimensionally (e.g., Black people).”

Is synthetic data all bad?

These examples suggest that synthetic data can only exacerbate bias in LLMs. But Thomas Hartvigsen and colleagues have produced a synthetic dataset that is designed to decrease bias against minoritized groups in AI model output.

I learned about this dataset, ToxiGen, in a talk at the University of Pittsburgh by one of the paper’s authors, computer scientist Saadia Gabriel. As she noted in the talk, filtering datasets for toxicity tends to censor communication about and within minoritized groups. Because there is so much hate speech associated with certain groups, human annotators and automatic filters often mislabel benign or in-group communication in these communities as toxic. Meanwhile, some subtler racist statements go undetected because they imply, rather than name, an identity group. ToxiGen is “a new large-scale and machine-generated dataset of 274k toxic and benign statements about 13 minority groups,” designed to train models to distinguish more accurately between benign and toxic statements.

Apparently, female praying mantises that have eaten their male partner produce more eggs, and those eggs carry the male’s genes. So, evolutionarily speaking, cannibalism can benefit both male and female mantises. Could synthetic data have similar benefits for subsequent generations of AI, even if the direct harms seem obvious?